Future CoLab 3000.

Structured AI readiness before decisions are committed


Most organisations think AI adoption starts with tools.

It does not.

It starts with decisions made before:

- the real problem is understood

- operational constraints are visible

- accountability is clear

- the way work actually functions in practice is understood

That is why so many AI initiatives stall after the initial excitement fades.

The technology is rarely the first problem.

The operating reality is.

By the time these issues become visible, momentum, budget, and political pressure make course correction difficult.

That is where my work starts.

About Andrew

I’m Andrew Privitera, founder of Future CoLab 3000.

I work with leadership teams before AI decisions are locked in, focusing on whether those decisions will hold up under real operational conditions.

This means forcing clarity on:

- what problem actually exists

- whether AI is appropriate

- and whether the organisation can realistically govern and operate it

Over 20 years working inside complex organisations as a strategic business analyst and transformation specialist have shown me a consistent pattern:

- technology is chosen before the problem is understood

- decisions are made before constraints are visible

- governance is added after the fact

The result is wasted budget, stalled initiatives, and frustrated teams... while leaders remain under pressure to act quickly. Most do so without a decision structure that can withstand scrutiny.

This work clarifies what must be true before AI decisions can succeed operationally:

- I define the problem before any solution is discussed

- I expose constraints that invalidate unrealistic AI ambitions

- I force clarity on what should not be done

- I align AI decisions with governance, accountability, and risk tolerance

AI decisions become explicit, testable, and defensible... before commitment.

How I work with you

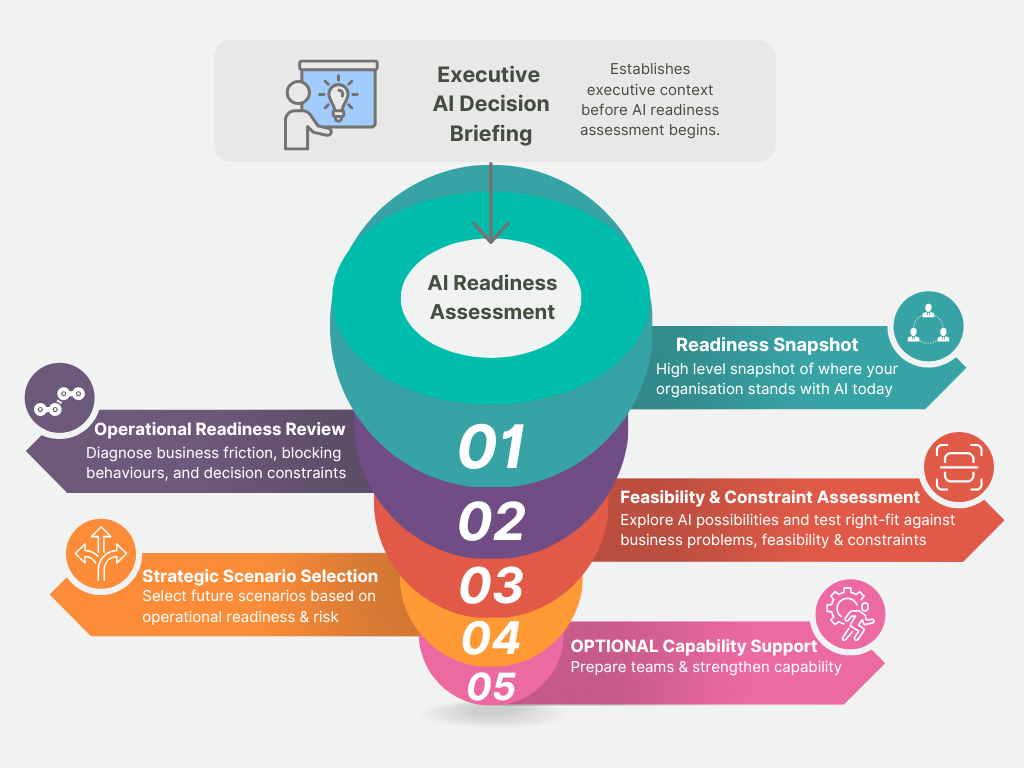

Engagements begin with a two-hour Executive AI Decision Briefing for leadership teams.

This session examines:

- how AI changes organisational decision environments

- where governance and accountability shift

- how probabilistic systems differ from deterministic operations

- where augmentation and autonomy create different risks

- and why organisations commonly misjudge AI readiness

The discussion also explores how emerging AI capability is beginning to affect the organisation’s broader industry and operating environment.

Following the briefing, organisations can progress into the AI Readiness Assessment process.

This structured assessment examines:

- how work actually operates

- where constraints and governance conditions exist

- where AI may or may not be realistically viable

- and what operational conditions must exist before implementation decisions are made

The focus is:

- decision quality

- operational reality

- governance clarity

- organisational readiness

- practical implementation conditions

What you achieve

What this work changes:

- problems are clarified before solutions are considered

- workflows are understood in practice, not assumed

- hidden effort and workarounds become visible

- decisions are grounded in operational reality

Most organisations don’t fail because AI doesn’t work.

They fail because decisions are made without understanding how the work actually operates.

This process corrects that.

Before AI decisions are made, organisations need to understand how work actually operates, where operational constraints exist, what level of AI is realistically feasible, and where human accountability must remain.

Most organisations move toward tools, pilots, or vendors before operational conditions, governance requirements, and decision boundaries are fully understood.

The AI Readiness Assessment process examines those conditions before implementation commitments accelerate. It is designed to assess operational suitability, governance exposure, feasibility under current conditions, and whether AI decisions are likely to hold up in practice.

Executive AI Decision Briefing

Engagements begin with a two-hour Executive AI Decision Briefing for leadership teams.

This session examines how AI changes organisational decision environments, governance conditions, accountability boundaries, and operating model assumptions before major commitments are made.


01. Readiness Snapshot

Where leadership determines deeper operational assessment is warranted, the AI Readiness Assessment process begins with a Readiness Snapshot.

This short readiness questionnaire examines current AI usage, operational challenges, organisational constraints, and areas of interest before deeper assessment begins.

02. Operational Readiness Review

We examine how work currently operates to identify operational pressure points, readiness gaps, and where AI may realistically support the organisation.

The focus is on understanding how work actually operates before implementation decisions are made.

03. Feasibility & Constraint Assessment

We assess shortlisted opportunities against organisational realities and constraints to determine what is realistically achievable under current conditions.

04. Strategic Scenario Selection

We evaluate practical pathways forward based on readiness, risk, and organisational priorities.

The outcome is a clearer strategic direction before major AI commitments are made.

05. Optional Strategic Advisory & Capability Support

Following the readiness assessment, organisations may require additional support to translate findings into practical next steps, strengthen capability, or prepare for future AI initiatives.

Support may include:

- business case & requirements development

- independent market scanning and vendor evaluation

- governance and executive decision support

- executive and team capability uplift

The focus remains on supporting informed decisions, governance discipline, and organisational readiness.

Why this matters

Many AI initiatives are set up to fail before implementation begins.

Not because of the technology.

But because organisations move toward tools, pilots, or vendors before they fully understand:

- how work actually operates

- where operational constraints exist

- what level of AI is realistically feasible

- where human judgement must remain essential

This process is designed to assess those conditions before major AI commitments are made.

The Executive AI Decision Briefing helps leadership teams examine operational readiness, governance exposure, and decision conditions before those commitments are made.